1. Introduction

In the contemporary landscape of residential living, the evolution of smart home technology stands as a testament to human innovation and the relentless pursuit of enhanced living experiences. Smart homes are defined as integrated living environments that leverage the Internet of Things (IoT), computational technologies, automation controls, visual presentation systems, and communication protocols within the domestic sphere. Once confined to the realm of science fiction, smart homes have now become integral to strategic energy planning and national policy [1]. They promise not only heightened convenience and personalized comfort but also significant advancements in energy efficiency and home automation.

As a new era in home management and personal comfort begins, the market adoption of smart home technologies depends on the extent to which perceived benefits outweigh potential risks. This balance is continuously recalibrated through innovative solutions and evolving consumer perceptions.

1.1. The Integral Role of Smart Cleaning Robots

Building on the foundation of smart home technology, smart cleaning robots have become increasingly integral to enhancing the efficiency and convenience of modern living. Smart home systems, as outlined by Li et al. [1], provide a comprehensive framework of interconnected technologies that facilitate the integration of advanced automation solutions, including robotic cleaning systems. Early explorations in robotics, such as the CARMEL project by Congdon et al. [2], laid the groundwork for these innovations by introducing foundational approaches to environmental perception and navigation using vision sweeps. The concept of smart cleaning robots has since evolved from theoretical constructs to practical tools that utilize advanced sensors, AI algorithms, and autonomous navigation to maintain cleanliness within homes. These robots have transformed the mundane task of cleaning into an automated process, saving time and elevating the standard of cleanliness through consistent and thorough operations.

The benefits of smart cleaning robots are manifold, with time savings being a primary advantage. By taking over routine cleaning duties that would otherwise be done with hand-operated vacuums, these robots free homeowners to engage in more productive or enjoyable activities, thus improving overall quality of life. Furthermore, the consistent cleaning patterns provided by robots help maintain a higher standard of cleanliness, contributing to a healthier living environment. This is particularly beneficial for individuals with allergies or other health conditions sensitive to indoor pollutants.

Smart cleaning robots have now become a common feature in many smart home ecosystems, offering a glimpse into a future where domestic tasks are fully automated. As these robots continue to evolve with improved AI capabilities and adaptive learning, their potential within the smart home is boundless. Future iterations are expected to integrate more seamlessly with other smart home devices, offering personalized cleaning schedules and advanced navigation systems capable of covering larger or more complex living spaces without supervision. The ongoing development and integration of smart cleaning robots within the smart home ecosystem underscore their vital role in shaping the future of domestic automation and convenience.

1.2. Camera-Based Vision Systems

The advent of camera-based vision systems has marked a significant leap in the capabilities of floor-cleaning robots, transforming them from basic vacuum cleaners into intelligent cleaning agents. These systems, powered by high-resolution cameras and advanced image processing algorithms, have revolutionized how robots perceive and interact with their environment. The integration of cameras enables robots to capture detailed visual data of the floor, allowing them to detect dirt spots with precision, regardless of the floor’s color or texture [3]. This technology is particularly crucial for industrial applications where cleaning quality and efficiency are paramount.

Grünauer et al. [4] approach dirt detection as a single-class classification problem, using unsupervised online learning with a Gaussian Mixture Model (GMM) that represents the floor pattern. The method identifies dirt spots on various floor types and is reported to outperform earlier approaches, especially on complex floor textures [4]. To improve the usability of floor-cleaning robots in real-world scenarios, a more general algorithm has also been introduced [3]. It applies spectral residual filtering to suppress the regular background of the image, leaving areas of dirt and residual noise, which significantly extends the applicability of cleaning robots in diverse environments [3].

In addition to GMMs, deep learning frameworks such as YOLOv5 have been employed to detect dirty spots, aiming to conserve energy and resources. Canedo et al. [5] demonstrated a robust vision system utilizing YOLOv5, achieving a mean average precision (mAP) of 0.874 for detecting solid dirt. Their work highlighted the effectiveness of synthetic data for training and the potential application of this framework in real-world scenarios. The cleaning system activates only when dirt is detected, with resources allocated proportionally to the area requiring cleaning [6].

The development of camera-based vision systems for floor-cleaning robots exemplifies continuous innovation in robotics and artificial intelligence. As these technologies advance, so does the potential for robots to perform cleaning tasks with greater autonomy, intelligence, and efficiency.

1.3. Identifying the Challenges in Smart Cleaning Robotics

The field of smart cleaning robotics, while significantly advanced, is not without its challenges. A primary concern lies in the inefficiencies of current cleaning patterns, where robot vacuums often struggle to optimize their cleaning routes, leading to wasted time and energy. This issue is particularly evident in the redundant, daily comprehensive cleaning cycles that many robots perform, regardless of the actual dirt levels in their environment.

Moreover, the problem of redundant cleaning is compounded by the lack of adaptability in many existing floor-cleaning robots. These robots often fail to differentiate between varying levels or types of dirt, including liquid waste, treating all areas with the same cleaning intensity. This one-size-fits-all approach is not only inefficient but also consumes excessive power, diminishing the overall effectiveness of the cleaning process. Grünauer et al. [4] demonstrated the potential of unsupervised learning methods, such as Gaussian Mixture Models (GMM), to enhance the adaptability of cleaning robots. Their approach enabled robots to identify specific dirt spots even in complex environments, paving the way for more resource-efficient and targeted cleaning.

To address these challenges, there is an increasing demand for adaptive and intelligent cleaning solutions. Robots must evolve to incorporate advanced sensors, AI algorithms, and machine learning capabilities that allow them to analyze their surroundings and adjust their cleaning strategies accordingly [7]. By detecting dirt levels and identifying high-priority areas, these robots can focus their efforts where they are most needed, while also conserving resources for critical tasks. The development of such intelligent systems is essential for the future of smart cleaning robotics. These advancements promise not only improved cleaning performance but also a more efficient and sustainable approach to household maintenance.

2. Proposed System: VisionSweep

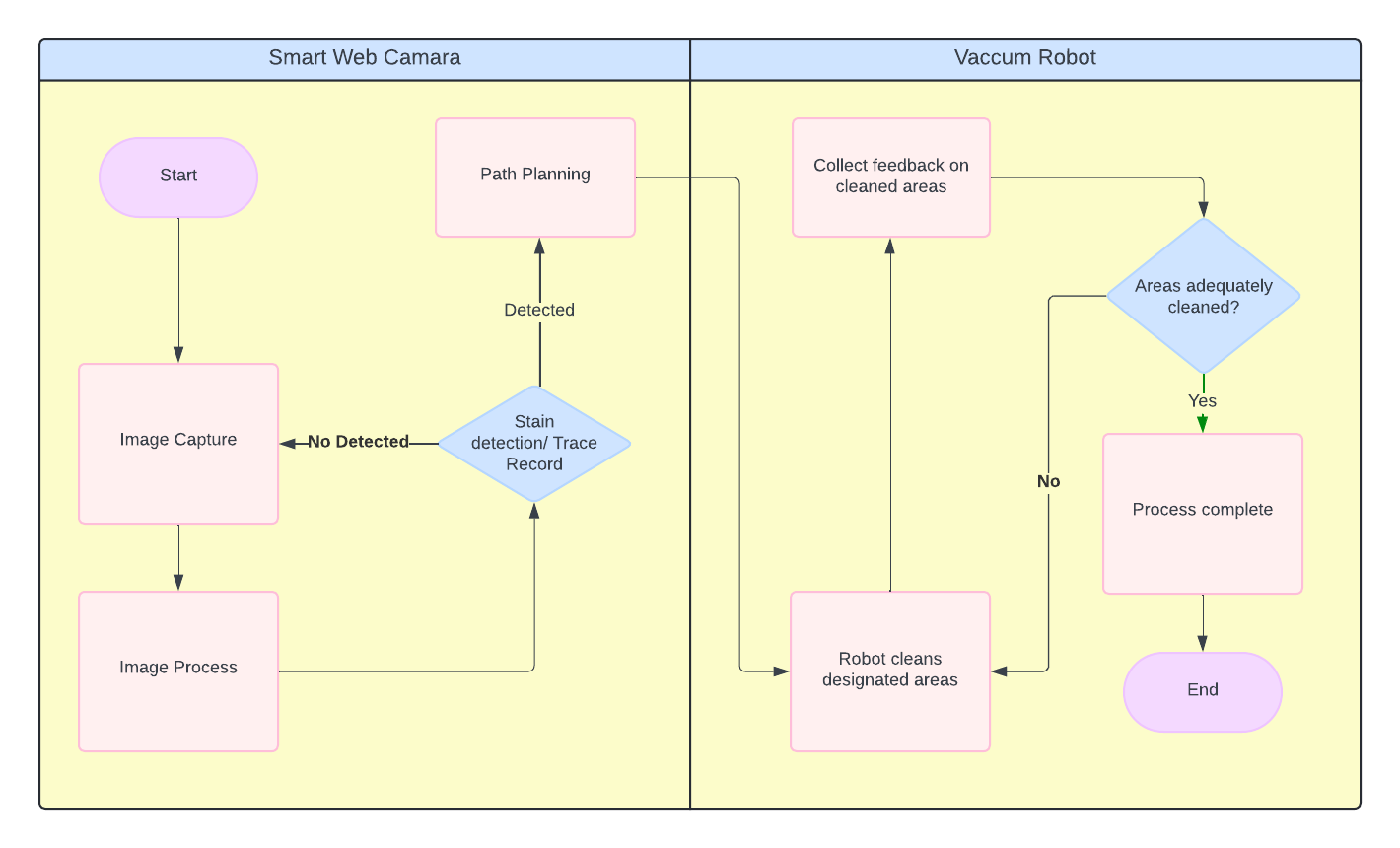

The VisionSweep system (see Figure 1) uses a web camera to capture indoor images, documenting human movement patterns and identifying dirt on the floor. The system processes these images to detect stains and record areas frequently traversed by individuals. Once stains are identified, the system maps their positions, along with human traffic paths, onto a room layout to facilitate efficient route planning. The generated route plan is then transmitted to the vacuum robot (see the flowchart in Figure 2).

Figure 1: Web camera detecting dirt or trash and human movement, and communicating with the vacuum robot

Figure 2: Flowchart of the system's working process

The vacuum robot executes the cleaning tasks based on the received route plan, focusing on the designated areas. This approach enables the system to prioritize cleaning zones with detected dirt while ensuring high-traffic areas are effectively cleaned, thereby enhancing the overall cleanliness of the room. Below is a detailed description of the system's operation:

2.1. Image Capture and Image Processing

The VisionSweep system uses a deep learning algorithm for object detection. It adopts the Single Shot Detector (SSD) architecture [8], a widely used object detection model, to detect objects of interest such as dirt and stains.

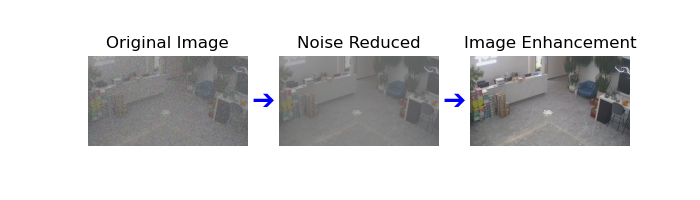

After image capture, the system applies a series of preprocessing steps to enhance image quality and prepare it for input into the SSD model. First, noise reduction techniques are used to minimize random visual noise that can interfere with object detection accuracy. This may include applying filters, such as Gaussian or median filters, to smooth out irregularities and improve image clarity [9]. Next, image enhancement techniques [10] are employed to further improve the quality of the preprocessed images by adjusting brightness, contrast, and sharpness. These combined processes ensure that the visual data is optimal for object detection, enabling better differentiation between objects and background elements and ultimately improving the overall accuracy and performance of the SSD model.
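The two preprocessing stages described above can be illustrated with a minimal sketch. It assumes a grayscale image stored as a list of rows of 0-255 integers, uses a 3x3 median filter for noise reduction, and uses a linear contrast stretch as a simple stand-in for image enhancement. The function names `median_filter3`, `stretch_contrast`, and `preprocess` are hypothetical and are not taken from the VisionSweep implementation; a deployed system would more likely rely on an image processing library.

```python
from statistics import median


def median_filter3(img):
    """Apply a 3x3 median filter to a grayscale image (list of rows).

    Border pixels use only the neighbours that exist inside the image.
    """
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [
                img[j][i]
                for j in range(max(0, y - 1), min(h, y + 2))
                for i in range(max(0, x - 1), min(w, x + 2))
            ]
            out[y][x] = int(median(window))
    return out


def stretch_contrast(img):
    """Linearly rescale intensities so the darkest pixel maps to 0
    and the brightest to 255. A flat image is returned unchanged."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:
        return [row[:] for row in img]
    return [[round((p - lo) * 255 / (hi - lo)) for p in row] for row in img]


def preprocess(img):
    """Noise reduction followed by enhancement, in the order described."""
    return stretch_contrast(median_filter3(img))
```

The median filter is chosen here because it removes isolated bright or dark pixels without blurring edges as strongly as a Gaussian filter; either filter fits the description in the text.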

Once preprocessing is complete, the optimized images are input into the SSD model. SSD performs object detection by simultaneously localizing and classifying objects in a single pass through the network [8]. Because it predicts from feature maps of different resolutions, SSD can detect objects at multiple scales, which makes it well suited to real-time applications. In the VisionSweep system, the SSD model processes images of the indoor environment captured by the web camera to identify and classify objects of interest, such as dirt, and to detect people, from which movement patterns are derived. By leveraging SSD MobileNet, a lightweight and optimized variant, the system aims for high detection accuracy while minimizing computational overhead. This enables the vacuum robot to prioritize cleaning in soiled and high-traffic areas, thereby enhancing cleaning efficiency and optimizing resource utilization.
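A single-pass detector such as SSD typically produces many overlapping candidate boxes for the same object, and these are pruned with non-maximum suppression before the detections are used [8]. The following sketch shows that post-processing step under stated assumptions: boxes are `(x1, y1, x2, y2)` tuples, each detection is a `(box, score, label)` tuple, and the thresholds `score_min` and `iou_max` are illustrative values rather than settings reported for VisionSweep.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, score_min=0.5, iou_max=0.45):
    """Greedy per-class non-maximum suppression.

    detections: list of (box, score, label) tuples.
    Returns the kept detections, highest score first.
    """
    candidates = sorted(
        (d for d in detections if d[1] >= score_min),
        key=lambda d: d[1],
        reverse=True,
    )
    kept = []
    for det in candidates:
        # Keep a box unless it overlaps too much with an already kept
        # box of the same class.
        if all(det[2] != k[2] or iou(det[0], k[0]) <= iou_max for k in kept):
            kept.append(det)
    return kept
```

Suppression is applied per class so that a person standing over a dirt spot does not cause the dirt detection to be discarded.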

2.2. Path Calculation

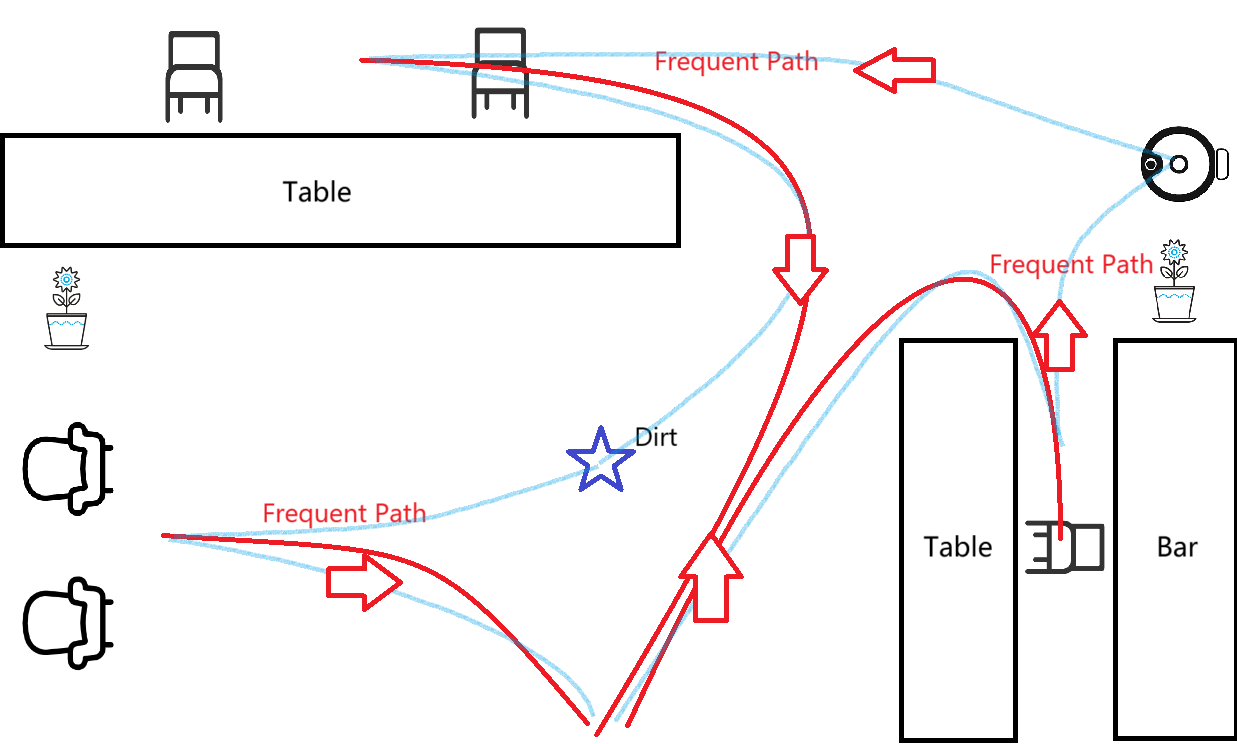

Initially, the robot constructs a map of the room utilizing data from its onboard sensors and the pre-existing floor plan. This map is fundamental for navigation and facilitates the robot's understanding of the room layout [11]. Concurrently, the web camera captures indoor images, applies algorithms to correct image distortions, and employs deep learning techniques to generate a two-dimensional representation of the room.

Subsequently, map alignment is performed by using the base station as a shared reference point for both the robot vacuum and the web camera. Because the position of the base station is known in both coordinate systems, this fixed location is used to align the robot vacuum’s map with the web camera’s map through translation and rotation operations, yielding a coarse overlap of the two maps [12], [13].
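The alignment step can be sketched as a two-dimensional rigid transform. This sketch assumes that, in addition to its position, the heading of the base station (the direction its dock faces) is known in both maps, since a single point alone fixes the translation but not the rotation. It also assumes both maps use the same scale and are not mirrored. The function name `make_alignment` and the pose format `(x, y, heading_radians)` are hypothetical.

```python
import math


def make_alignment(base_cam, base_robot):
    """Build a function that maps camera-map points to robot-map points.

    base_cam, base_robot: pose of the base station in each map,
    given as (x, y, heading_radians).
    """
    xc, yc, hc = base_cam
    xr, yr, hr = base_robot
    dtheta = hr - hc  # rotation that turns camera-map axes into robot-map axes
    cos_t, sin_t = math.cos(dtheta), math.sin(dtheta)

    def cam_to_robot(point):
        # Express the point relative to the base station, rotate it,
        # then translate it to the base station's robot-map position.
        dx, dy = point[0] - xc, point[1] - yc
        return (
            xr + cos_t * dx - sin_t * dy,
            yr + sin_t * dx + cos_t * dy,
        )

    return cam_to_robot
```

For example, a dirt spot located one unit in front of the base station in the camera map is mapped to the point one unit in front of the base station in the robot map, whatever the relative rotation between the two maps.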

Once the room map is established and aligned with the camera-generated map, the robot employs a pathfinding algorithm, such as Dijkstra’s, to determine the most efficient route to areas where dirt is detected. Dijkstra’s algorithm computes the lowest-cost path between nodes of a graph with non-negative edge costs, allowing the robot to navigate efficiently while limiting energy usage. The path planning considers obstacles, high-traffic zones, and dirt locations, ensuring prioritized cleaning in frequently used areas. Research by Hao et al. [14] demonstrates that integrating efficient pathfinding with automatic recharging path planning can significantly enhance the robot’s operational longevity and minimize downtime. Additionally, Balakrishnan et al. [15] highlight the value of incorporating deep learning algorithms to dynamically adjust cleaning priorities based on detected dirt levels and high-traffic zones, enabling more targeted and resource-efficient cleaning. This combined approach ensures comprehensive and prioritized coverage, enhancing the robot’s cleaning performance and resource efficiency [14].
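As an illustration of the pathfinding step, the sketch below runs Dijkstra’s algorithm on an occupancy grid. It assumes the aligned room map has been discretized into cells, that the robot moves between 4-connected neighbours, and that each cell carries a non-negative cost of entering it, with `None` marking an obstacle. The grid representation and the function name `dijkstra_grid` are assumptions made for this example, not details of the VisionSweep implementation.

```python
import heapq


def dijkstra_grid(cost, start, goal):
    """Lowest-cost path on a 4-connected grid.

    cost[r][c]: non-negative cost of entering the cell, or None for an obstacle.
    start, goal: (row, col) tuples.
    Returns (total_cost, path); path is a list of (row, col) from start to goal,
    or (inf, []) if the goal cannot be reached.
    """
    rows, cols = len(cost), len(cost[0])
    dist = {start: 0}
    prev = {}
    heap = [(0, start)]
    while heap:
        d, cell = heapq.heappop(heap)
        if cell == goal:
            # Walk the predecessor links back to the start.
            path = [cell]
            while cell in prev:
                cell = prev[cell]
                path.append(cell)
            return d, path[::-1]
        if d > dist.get(cell, float("inf")):
            continue  # stale queue entry, a cheaper route was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and cost[nr][nc] is not None:
                nd = d + cost[nr][nc]
                if nd < dist.get((nr, nc), float("inf")):
                    dist[(nr, nc)] = nd
                    prev[(nr, nc)] = cell
                    heapq.heappush(heap, (nd, (nr, nc)))
    return float("inf"), []
```

Cell costs give one simple way to express the priorities mentioned above: for instance, cells the planner should avoid crossing unnecessarily can be assigned a higher cost than free floor.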

2.3. Vacuum Robot

Once the path is generated and transmitted to the robot, it navigates to the designated areas to perform cleaning tasks. Upon reaching the approximate locations identified by the web camera, the robot uses its onboard sensors to precisely locate stains and dirt. This ensures thorough cleaning in high-priority areas. After completing the cleaning tasks, the robot autonomously returns to the base station, ready for its next operation.

3. Expected Results

The VisionSweep system is designed to deliver a structured and effective cleaning solution by leveraging advanced image processing, object detection, and path planning. The anticipated outcomes for each phase of the process are as follows:

3.1. Image Processing

When images are captured by the web camera under various lighting conditions, the preprocessing pipeline includes noise reduction followed by image enhancement. In the example shown, the initial image undergoes noise reduction to minimize visual distortions, followed by enhancement to improve clarity and brightness. These steps ensure the final processed image is clear and ready for accurate object detection. Refer to Figure 3.

Figure 3: Image Processing Steps

3.2. Object Detection

After preprocessing, the SSD model is expected to accurately detect relevant objects such as dirt spots and areas of human activity. For instance, the system should identify specific dirt locations and distinguish them from other objects or background noise, as well as recognize high-traffic zones based on detected human traces. This detection is critical for mapping and prioritizing areas that require intensive cleaning. Refer to Figure 4.
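One simple way to turn detected human traces into high-traffic zones is to accumulate person detections over many frames into a grid of floor cells. The sketch below assumes each person detection has already been converted to a floor coordinate in metres. The cell size `cell_size` and the threshold `min_share` are illustrative parameters, and the function names are hypothetical.

```python
from collections import Counter


def traffic_counts(positions, cell_size=0.5):
    """Count person detections per floor cell.

    positions: iterable of (x, y) floor coordinates in metres,
    one entry per person detection per frame.
    """
    counts = Counter()
    for x, y in positions:
        counts[(int(x // cell_size), int(y // cell_size))] += 1
    return counts


def high_traffic_cells(counts, min_share=0.2):
    """Return the cells that hold at least min_share of all detections."""
    total = sum(counts.values())
    if total == 0:
        return set()
    return {cell for cell, n in counts.items() if n / total >= min_share}
```

The resulting set of cells can be marked on the room layout alongside the detected dirt locations.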

Figure 4: Trash detected on floor

3.3. Path Planning

Once dirt locations and high-traffic areas are mapped, the path planning algorithm (e.g., Dijkstra’s) generates an optimized cleaning route that considers these priorities. The route is expected to minimize redundant travel and energy usage while ensuring comprehensive coverage of the room. A sample output from this step may include a visualized path, illustrating how the robot navigates to efficiently cover detected dirt areas and high-traffic zones. Refer to Figure 5.
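When several areas need cleaning, the order in which they are visited also affects travel distance. The sketch below shows one possible greedy ordering, which is an assumption of this example rather than the method used by VisionSweep: the robot always serves the highest remaining priority first and, within that priority, the nearest target. Distances are straight-line distances that ignore obstacles; in a full system they could be replaced by path costs from the planner. The function name `order_targets` and the `(x, y, priority)` target format are hypothetical.

```python
import math


def order_targets(start, targets):
    """Order cleaning targets greedily.

    start: (x, y) position of the robot, for example the base station.
    targets: list of (x, y, priority) tuples; larger priority is served first.
    Returns the targets in visiting order.
    """
    remaining = list(targets)
    pos = start
    route = []
    while remaining:
        top = max(t[2] for t in remaining)
        # Among the most urgent targets, pick the one closest to the robot.
        nxt = min(
            (t for t in remaining if t[2] == top),
            key=lambda t: math.dist(pos, t[:2]),
        )
        route.append(nxt)
        remaining.remove(nxt)
        pos = nxt[:2]
    return route
```

A greedy ordering does not guarantee the shortest overall tour, but it is easy to compute and keeps heavily soiled areas at the front of the route.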

Figure 5: Path Planning Schematic Diagram

3.4. Cleaning Execution

Following path generation, the vacuum robot navigates to the designated areas and uses its onboard sensors to precisely locate and clean the identified stains or dirt. This step ensures thorough cleaning of high-priority zones. Upon completing its tasks, the robot autonomously returns to its base station, signaling successful task completion and readiness for the next operation.

Overall, the VisionSweep system is expected to significantly enhance cleaning efficiency by focusing on the most soiled and trafficked areas. Through precise, adaptive path planning and targeted cleaning actions, it conserves resources while achieving a high standard of cleanliness.

4. Conclusion

In conclusion, VisionSweep offers a structured and effective solution to address the inefficiencies and limitations of current smart cleaning systems. By integrating web camera vision with advanced image processing and path optimization, VisionSweep prioritizes high-traffic and heavily soiled areas for cleaning. The proposed system tackles key challenges faced by existing robotic cleaning solutions, such as redundant cleaning cycles and limited adaptability, through the use of the SSD model for accurate detection and Dijkstra’s algorithm for efficient path planning. Although experimental validation remains pending, VisionSweep establishes a solid foundation for advancing smart home cleaning technology. Future research could focus on system optimization, the integration of additional sensors, and extensive real-world testing to validate and enhance its performance across diverse home environments.